AccuKnox Splunk App¶

Introduction¶

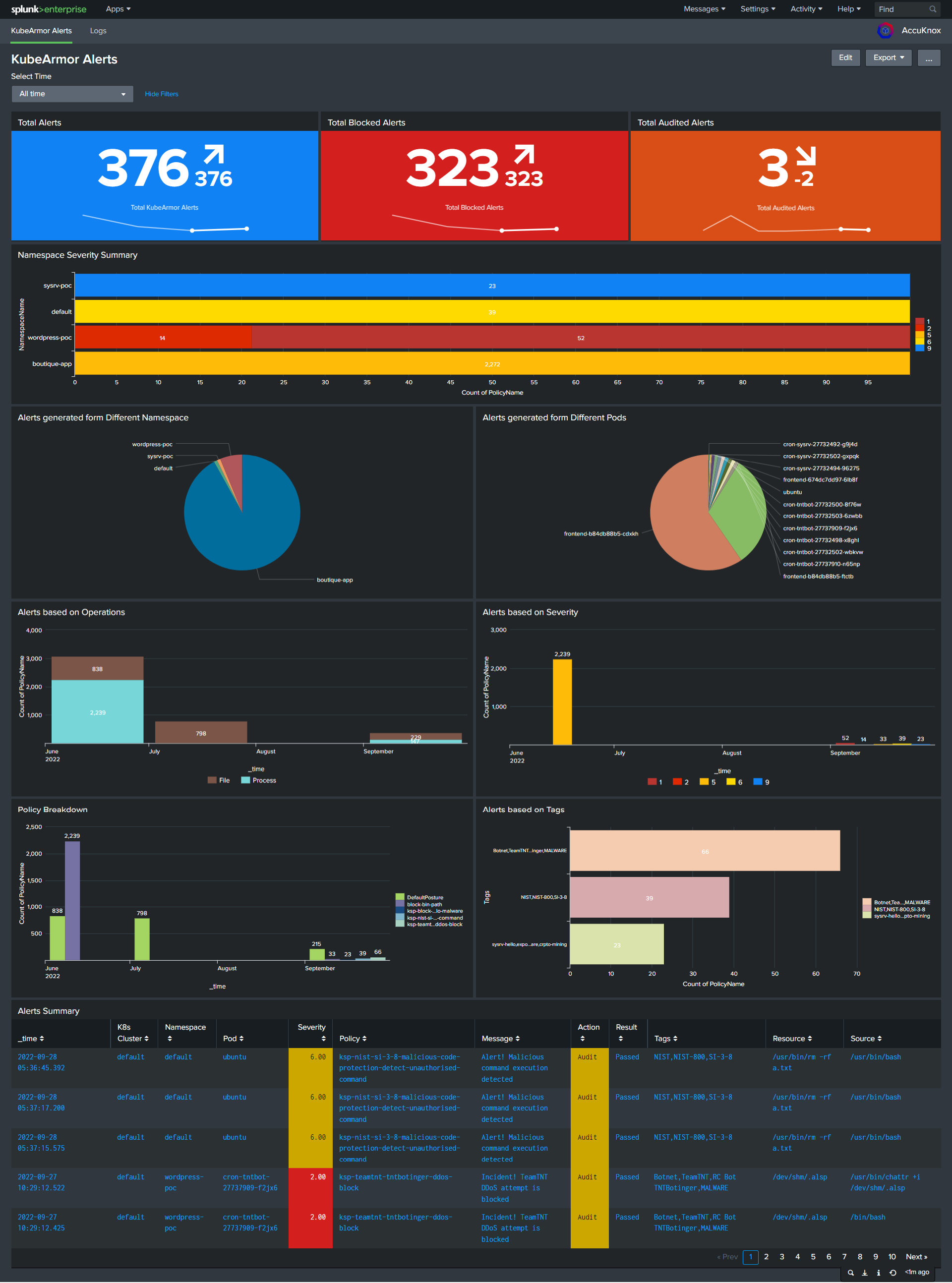

The AccuKnox Splunk App delivers operational reporting through a simplified, configurable dashboard. Users can view real-time alerts in the form of logs and telemetry.

Important features

- Dashboard to track real-time alerts generated from the K8s cluster.

- Data models with pivots for easy access to data and visualization.

- Filter alerts by namespace, pod, operation, severity, tag, and policy action.

- Drill down to see how an alert was generated, which policy was violated, and what the result was.

Installation¶

Prerequisites¶

1. A K8s cluster with the AccuKnox agents installed and running. KubeArmor and Feeder Service are mandatory. The environment variable for the feeder must be set for the K8s cluster in use.

2. An active Splunk deployment and access to it.

To deploy Splunk on a Kubernetes cluster, follow https://splunk.github.io/splunk-operator/ and, for Linux, follow https://docs.splunk.com/Documentation/Splunk/9.0.1/Installation/InstallonLinux

Where to install it?¶

The Splunk App can be installed on a Splunk Enterprise deployment running on K8s or on a VM. There are three ways to install it.

Option 1: Install from File¶

This App can be installed by uploading the file through the Splunk UI.

- Download the AccuKnox Splunk App and package it as an archive by running the following commands. This can be done on any machine from which you can upload the file to the Splunk UI.

git clone https://github.com/accuknox/splunk.git AccuKnox

tar -czvf AccuKnox.tar.gz AccuKnox

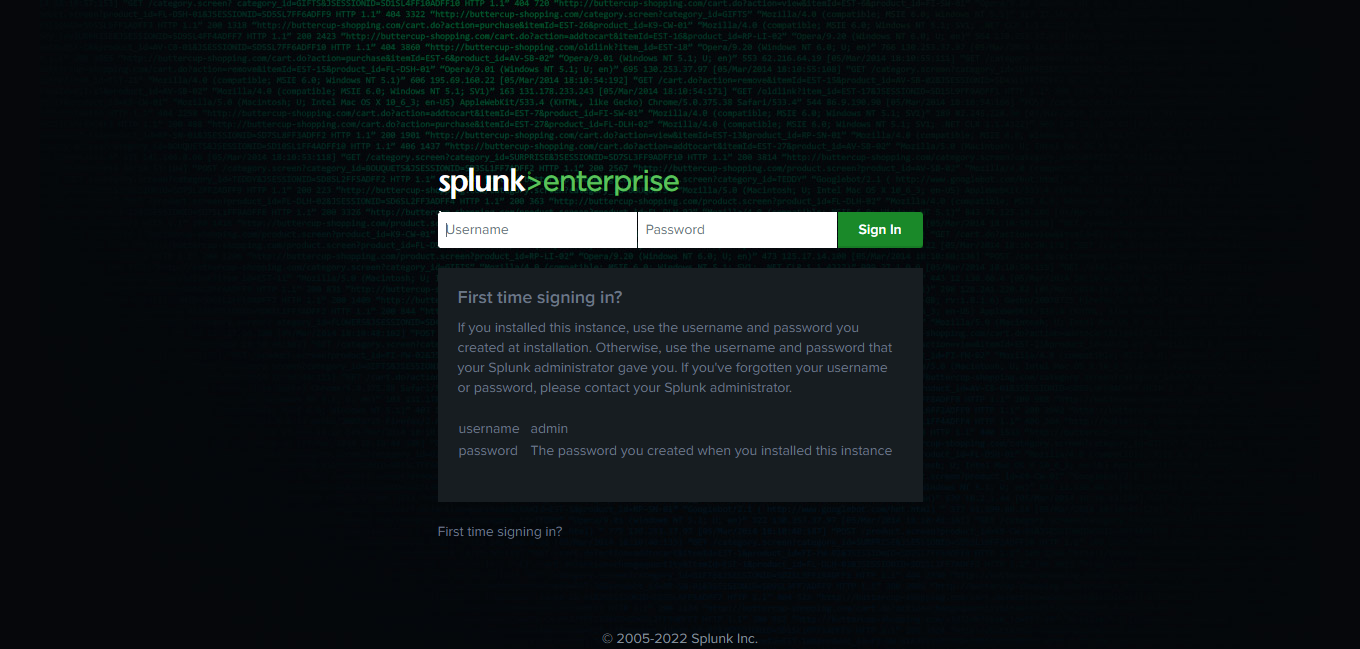

- Log in to your Splunk Deployment.

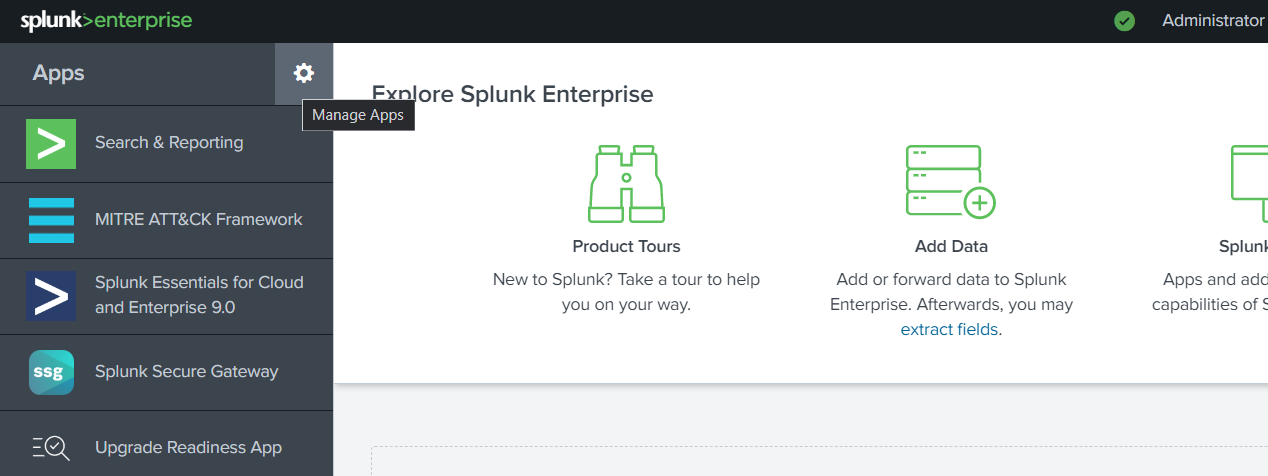

- Click on the gear icon next to Apps.

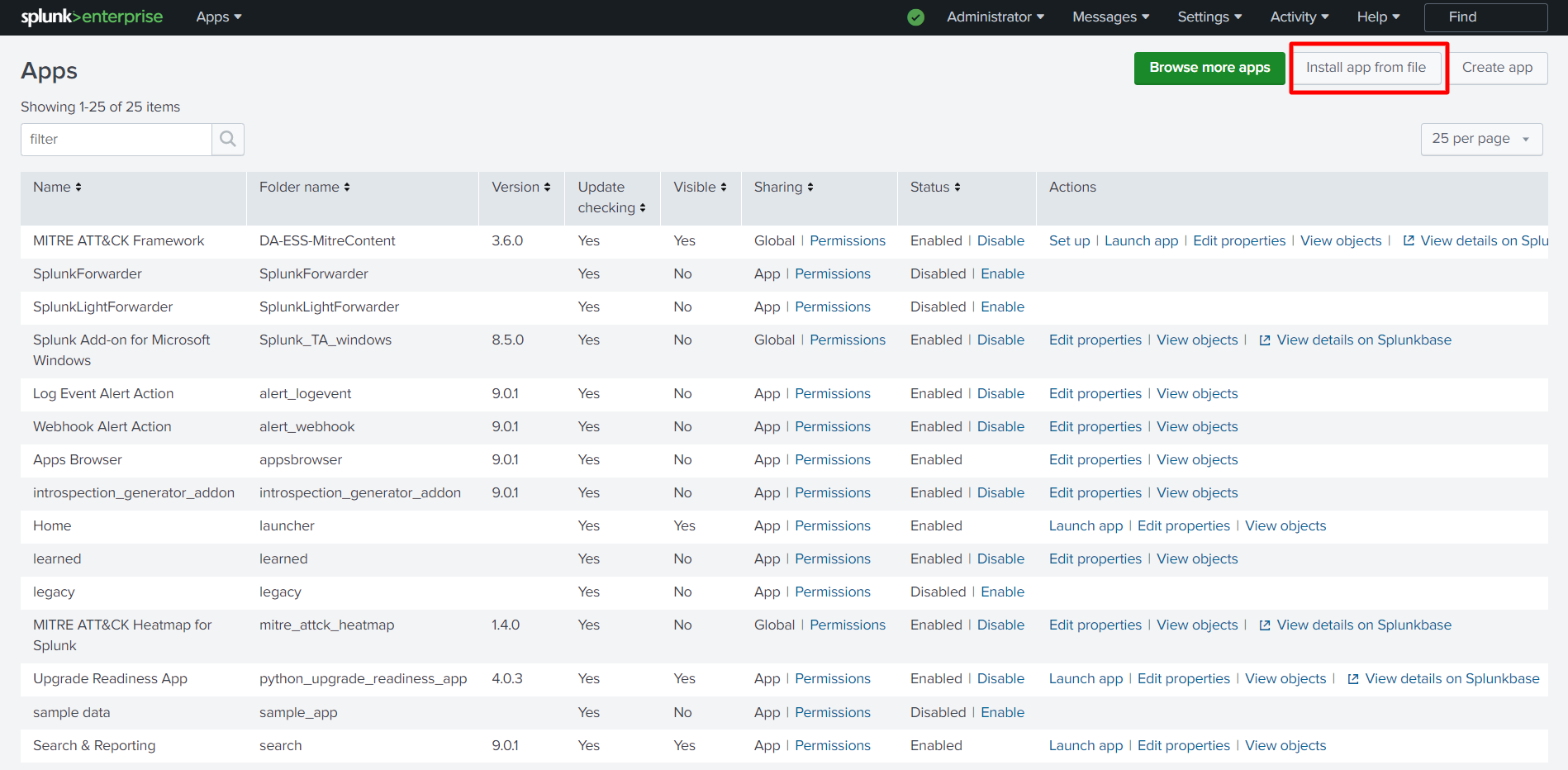

- This will navigate you to the Apps Dashboard. On the top right, click on Install app from file.

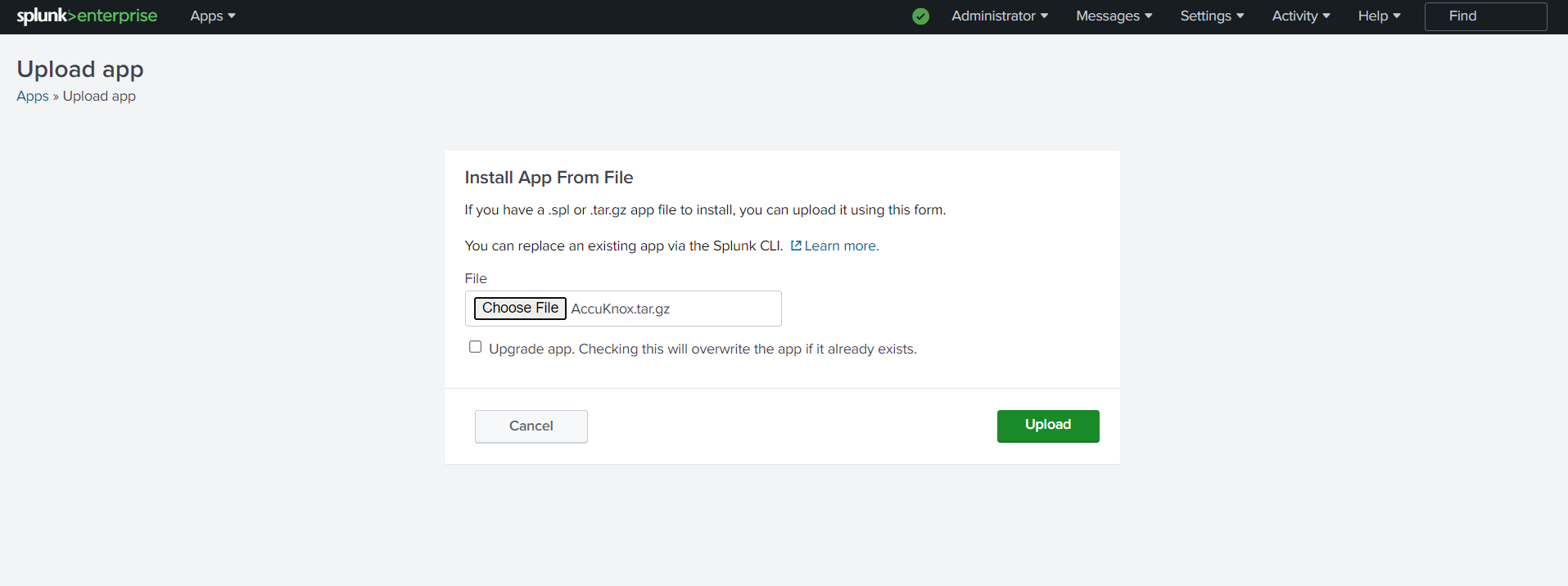

- This opens the Upload App screen. Select the AccuKnox.tar.gz file created in the first step and upload it. If the app is already installed and you are updating it, select the Upgrade App check box.

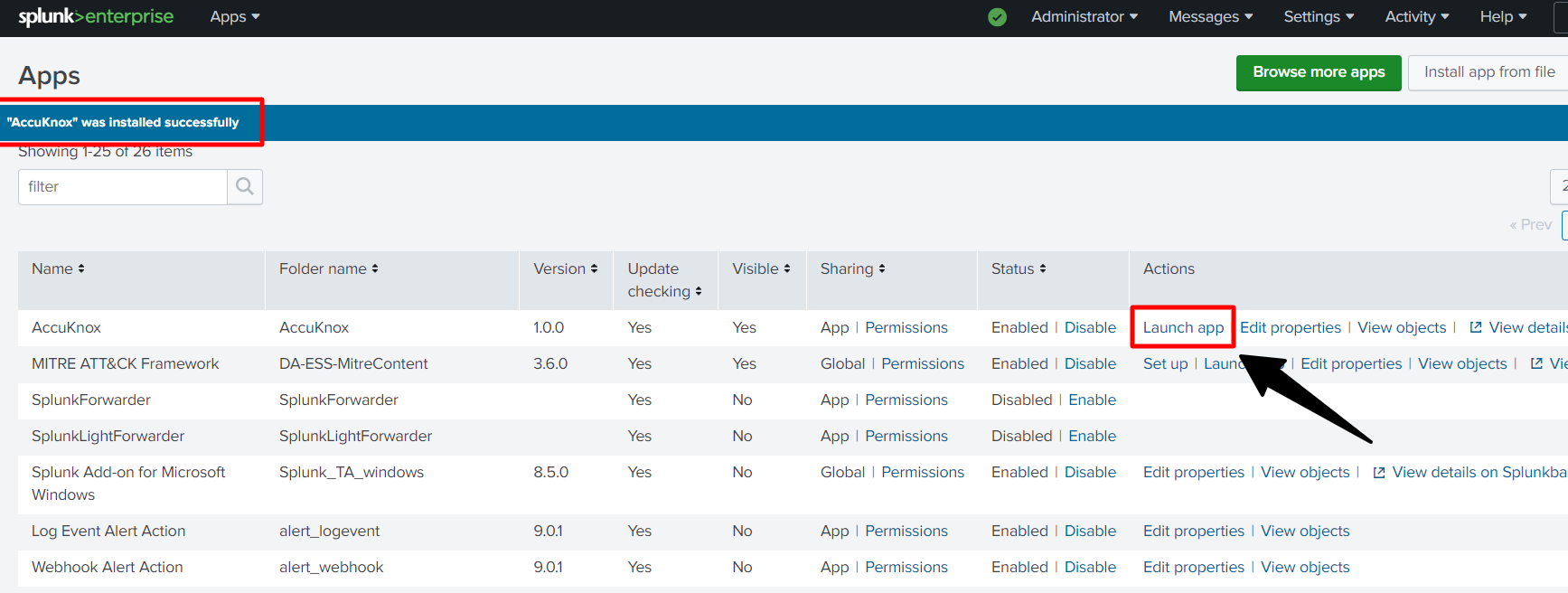

- Once uploaded, the App is installed on the Splunk deployment and a confirmation message appears: "AccuKnox" was installed successfully. Click on Launch App to view the App.

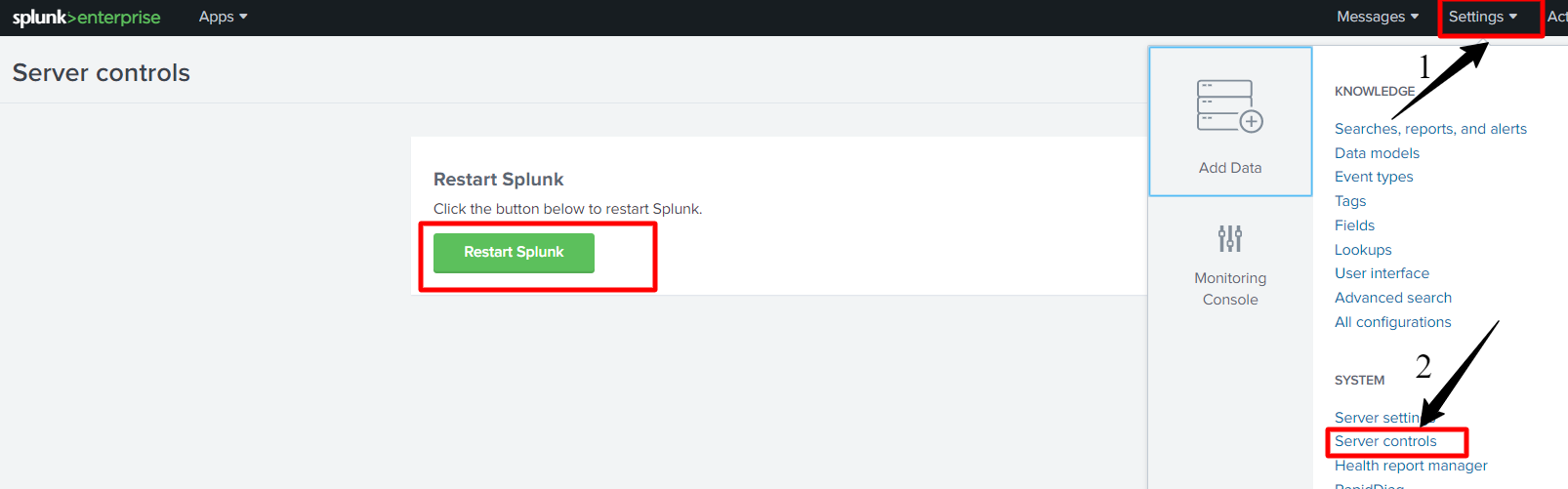

7. Restart Splunk for the App to work properly: go to Settings > Server Control > Restart Splunk. The restart takes approximately 1-2 minutes.

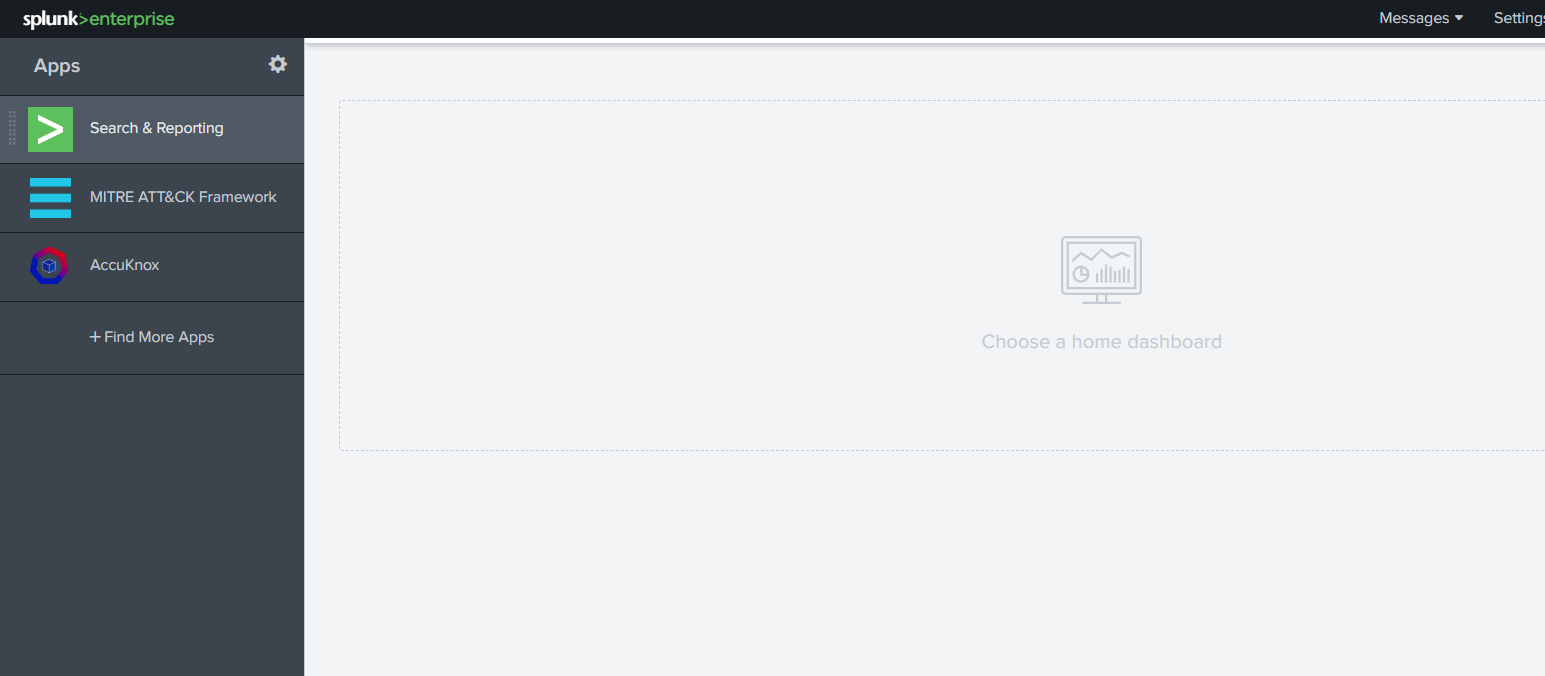

8. Wait for Splunk to restart, then log back in to see the AccuKnox App in the Apps section.

9. Click on the AccuKnox App to launch the App. This will navigate you to the App dashboard.


Note:

- If the dashboards show no data, first configure HEC on Splunk and forward the data. See the sections below on how to create and configure HEC and forward data.

- If data is not being pushed, log in to Splunk and go to Settings > Data inputs > HTTP Event Collector > Global Settings, then disable SSL, if enabled, by unchecking the box.

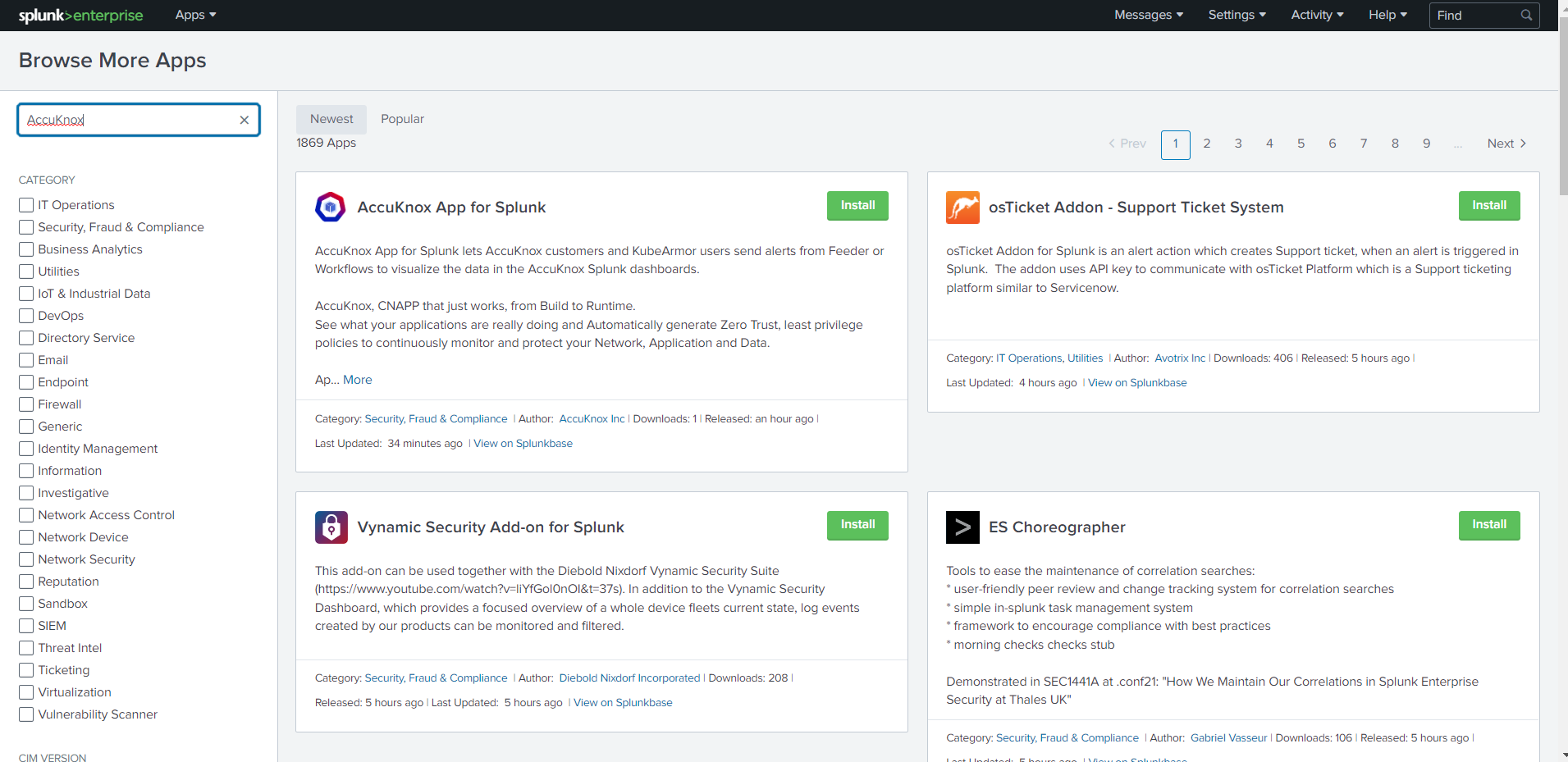
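To check that HEC accepts events before forwarding real alerts, a test event can be posted with curl. The following is a minimal sketch and not part of the AccuKnox tooling: the host name, port 8088 (Splunk's default HEC port), and token are placeholders to replace with your own values, and the helper names `hec_payload` and `send_test_event` are made up for illustration.

```shell
# Placeholder values (assumptions): replace with your own HEC endpoint and token.
HEC_URL="${HEC_URL:-https://splunk.example.com:8088/services/collector/event}"
HEC_TOKEN="${HEC_TOKEN:-00000000-0000-0000-0000-000000000000}"

# Build a minimal HEC JSON payload: hec_payload <message> <index>
# The message must not contain double quotes or backslashes (no JSON escaping here).
hec_payload() {
  printf '{"event": "%s", "index": "%s"}' "$1" "$2"
}

# POST the payload to HEC, which authenticates with an
# "Authorization: Splunk <token>" header.
# -k skips TLS certificate verification; drop it if Splunk uses a trusted certificate.
send_test_event() {
  curl -sS -k "$HEC_URL" \
    -H "Authorization: Splunk $HEC_TOKEN" \
    -d "$(hec_payload "$1" "${2:-main}")"
}

# Usage (requires network access to Splunk):
# send_test_event "AccuKnox HEC connectivity test" main
```

If SSL has been disabled in Global Settings as described in the note above, use `http://` instead of `https://` in `HEC_URL`.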

Option 2: Install the App from SplunkBase¶

Install the AccuKnox App by downloading it from the App homepage.

Option 3: Install from GitHub¶

This App is available on SplunkBase and GitHub. Optionally, you can clone the GitHub repository to install the App. Feel free to submit contributions to the App using pull requests on GitHub.

- Locate the Splunk deployment in your environment.

- Navigate to the Splunk apps directory: /opt/splunk/etc/apps for Linux users and \Program Files\Splunk\etc\apps for Windows users.

- From the directory $SPLUNK_HOME/etc/apps/, run the following command:

git clone https://github.com/accuknox/splunk.git AccuKnox
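The clone step can be wrapped in a small script. This is a sketch under assumptions: `SPLUNK_HOME` is taken to be `/opt/splunk` unless it is already set, the function name `install_accuknox_app` is hypothetical, and the restart at the end is an assumption carried over from Option 1 (Splunk normally needs a restart to load an app added on disk).

```shell
# Assumption: default Linux install location; override by exporting SPLUNK_HOME.
SPLUNK_HOME="${SPLUNK_HOME:-/opt/splunk}"

# Clone the app into Splunk's apps directory, then restart Splunk so it loads the app.
install_accuknox_app() {
  apps_dir="$SPLUNK_HOME/etc/apps"
  # Refuse to overwrite an existing copy of the app.
  if [ -d "$apps_dir/AccuKnox" ]; then
    echo "AccuKnox app already present in $apps_dir" >&2
    return 1
  fi
  git clone https://github.com/accuknox/splunk.git "$apps_dir/AccuKnox" || return 1
  "$SPLUNK_HOME/bin/splunk" restart
}

# Usage (run as a user allowed to write to $SPLUNK_HOME):
# install_accuknox_app
```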

What data types does AccuKnox export/integrate into Splunk?¶

- KubeArmor Alert

- KubeArmor Container Logs

- Cilium Alerts

- Cilium Logs

Managing data sent to Splunk, What can be sent?

AccuKnox can forward the data to Splunk in two ways:

- From feeder service running on client cluster

- From SaaS platform

Forwarding Events to Splunk from Feeder¶

Prerequisites:

- Feeder Service and KubeArmor are installed and running on the user’s K8s cluster.

- A sample application can be used to generate the alerts; check how to deploy a sample application and generate alerts.

Configuring feeder for the first time to forward the events:¶

1. Assuming you have access to your K8s cluster, run the following command to edit the feeder deployment.

kubectl edit deployment feeder-service -n accuknox-agents

2. Update the following configuration parameters for Splunk. The default values shown below need to be modified (line number 93 of the feeder chart).

To start editing, press the Insert key.

name: SPLUNK_FEEDER_ENABLED

value: false

Change the value to true to enable the feed.

name: SPLUNK_FEEDER_URL

value: https://<splunk-host>

Change the value to the HEC URL created.

name: SPLUNK_FEEDER_TOKEN

value: "x000x0x0x-0xxx-0xxx-xxxx-xxxxx00000"

Change the value to the token generated for HEC.

name: SPLUNK_FEEDER_SOURCE_TYPE

value: "http:kafka"

Change the value to http:kafka if not already set.

name: SPLUNK_FEEDER_SOURCE

value: "json"

Change the value as per your choice.

name: SPLUNK_FEEDER_INDEX

value: "main"

Change the value to main.

Press Esc (or Ctrl + C) once editing is done, then type :wq and press Enter to save the configuration.

Additionally, you can enable or disable event forwarding to Splunk at runtime:

kubectl set env deploy/feeder-service SPLUNK_FEEDER_ENABLED="true" -n accuknox-agents

Setting the flag to true (as above) pushes events to Splunk; setting it to false stops pushing logs.

Note: Other configuration parameters can be updated at runtime in the same way.

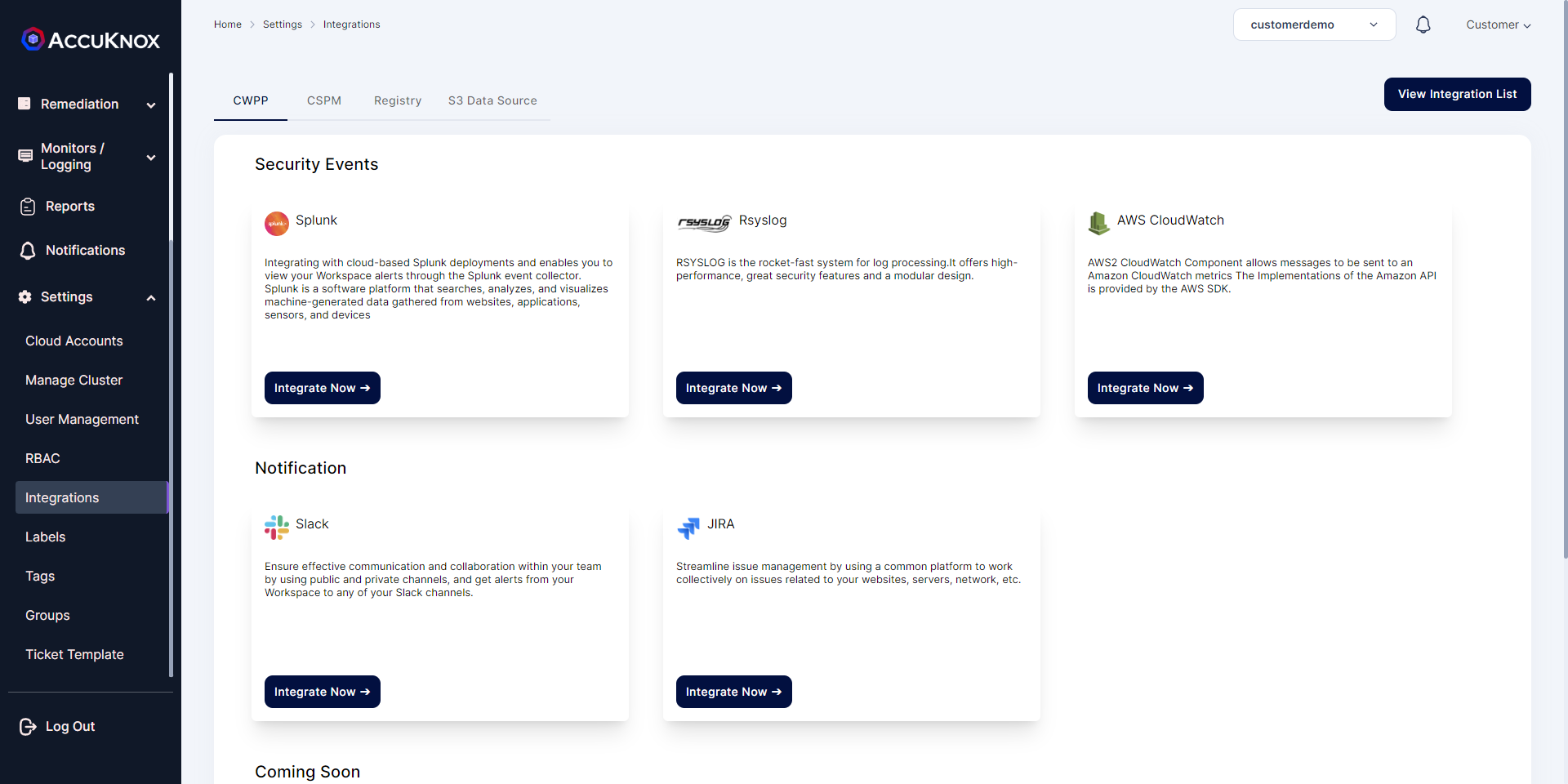
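Several parameters can be changed in one runtime update, since `kubectl set env` accepts multiple KEY=VALUE pairs. The following is a minimal sketch, assuming the deployment name and namespace used above; the function names `configure_feeder_splunk` and `show_feeder_splunk_env` are hypothetical, and the URL and token in the usage lines are placeholders.

```shell
# Update the Splunk-related feeder settings in a single rollout.
# Arguments: <hec-url> <hec-token> [index]
configure_feeder_splunk() {
  kubectl set env deploy/feeder-service \
    SPLUNK_FEEDER_ENABLED="true" \
    SPLUNK_FEEDER_URL="$1" \
    SPLUNK_FEEDER_TOKEN="$2" \
    SPLUNK_FEEDER_INDEX="${3:-main}" \
    -n accuknox-agents
}

# Print the Splunk-related variables currently set on the deployment.
show_feeder_splunk_env() {
  kubectl set env deploy/feeder-service --list -n accuknox-agents \
    | grep '^SPLUNK_FEEDER_'
}

# Usage (placeholder URL and token):
# configure_feeder_splunk "https://<splunk-host>" "<hec-token>" main
# show_feeder_splunk_env
```

Because changing the environment updates the pod template, the deployment rolls out new feeder pods with the new values.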

From SaaS, Channel Integration¶

Integration of Splunk¶

1. Prerequisites¶

Set up Splunk HTTP Event Collector (HEC) to view alert notifications from AccuKnox in Splunk. Splunk HEC lets you send data and application events to a Splunk deployment over the HTTP and Secure HTTP (HTTPS) protocols.

- To set up HEC, use the instructions in the Splunk documentation. For source type, _json is the default; if you specify a custom string on AccuKnox, that value will overwrite anything you set here.

- Select Settings > Data inputs > HTTP Event Collector and make sure HEC appears in the list with the status Enabled.
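The HEC status can also be checked from the command line. The following is a minimal sketch, assuming HEC listens on Splunk's default port 8088 and that your Splunk version exposes the HEC health endpoint `/services/collector/health`; the host name and the helper names `hec_health_url` and `check_hec_health` are placeholders for illustration.

```shell
# Placeholder (assumption): base URL of the Splunk HEC listener.
HEC_BASE="${HEC_BASE:-https://splunk.example.com:8088}"

# Derive the health-check URL from the base URL (one trailing slash is tolerated).
hec_health_url() {
  printf '%s/services/collector/health' "${1%/}"
}

# Query the health endpoint; -k skips TLS certificate verification.
check_hec_health() {
  curl -sS -k "$(hec_health_url "$HEC_BASE")"
}

# Usage (requires network access to Splunk):
# check_hec_health
```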

2. Steps to Integrate¶

- Go to Channel Integration.

- Click Integrate Now on Splunk.

- Enter the following details to configure Splunk:

    - Select the Splunk App: From the dropdown, select Splunk Enterprise.

    - Integration Name: Enter a name for the integration. You can set any name, e.g., Test Splunk

    - Splunk HTTP event collector URL: Enter the Splunk HEC URL generated earlier, e.g., https://splunk-xxxxxxxxxx.com/services/collector

    - Index: Enter the Splunk index selected while creating HEC, e.g., main

    - Token: Enter the Splunk token generated while creating HEC, e.g., x000x0x0x-0xxx-0xxx-xxxx-xxxxx00000

    - Source: Enter the source as http:kafka

    - Source Type: Enter your source type. This can be any value; it is attached to the events forwarded to Splunk, e.g., _json

- Click Test to verify the integration. A test message is delivered to the configured Splunk deployment, e.g.

    Test Message host = xxxxxx-deployment-xxxxxx-xxx00 source = http:kafka sourcetype = trials

- Click Save to save the integration. You can now configure alert triggers for Splunk notifications.

How will AccuKnox manage the Splunk data—what will be sent & what will not be sent?

Managing what type of data can be sent to Splunk?¶

AccuKnox uses triggers to control which types of data are forwarded to an integration.

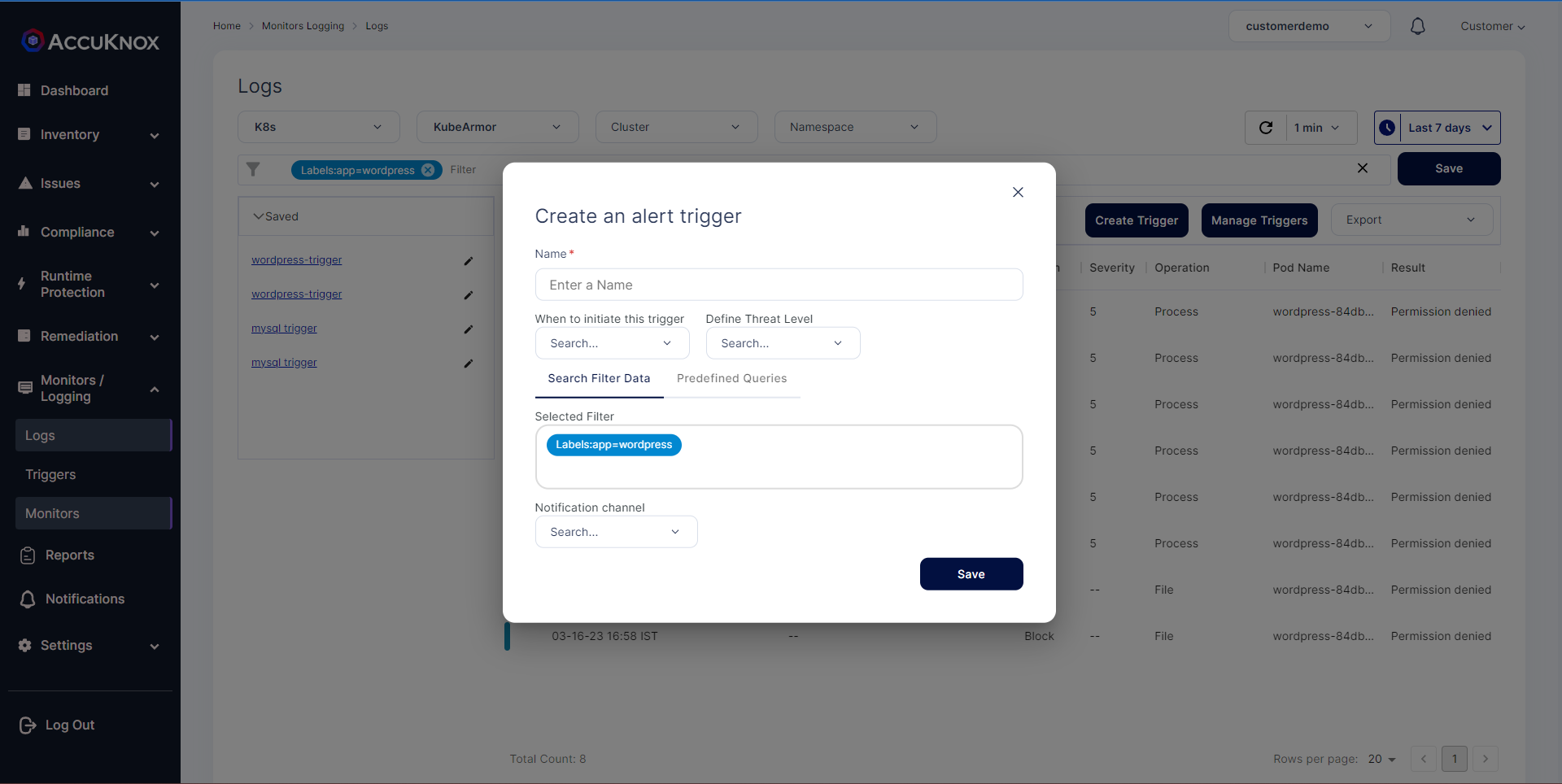

How to create a new trigger?¶

- After choosing a specific log filter from the Logs screen, click the Create Trigger button. You can either click elements directly from the log events list, search for elements directly in the filter, or use Search Filters to choose a specific log filter.

- Configure the required options:

    - Name: Define an alert trigger name.

    - When to initiate this trigger: Set the frequency of the trigger. There are four options: (1) Runtime as it happens (2) Once a day (3) Once a week (4) Once a month

    - Define Threat Level: Define the threat level for the trigger. There are three options: (1) High (2) Medium (3) Low

    - Selected Filter: The log filter chosen in step 1 is populated here. You can also switch to a predefined filter from here.

    - Notification channel: Choose the notification channel that should receive the alerts.

    Note: Before selecting the notification channel, complete the channel integration for that channel. Review the Channel Integration Guide for more context.

- Click the Save button to store the trigger in the database.